Chapter 8. Assessments, evaluations and how they are used

This chapter examines four evaluation and assessment activities in particular: student assessments, data-collection practices, school accountability and improvement actions at school. It discusses how school systems use the information they gather from these evaluations and assessments, and then relates these uses to student performance and equity in the education system.

Evaluation and assessment, as discussed in this chapter, refers to the policies and practices through which education systems assess student learning and evaluate teacher practices and school outcomes. It also covers how education systems and schools use the results of assessment and evaluation to improve classroom processes and student learning.

The OECD Review on Evaluation and Assessment Frameworks for Improving School Outcomes identifies the hallmarks of strong evaluation and assessment systems (OECD, 2013[1]). These include setting clear and ambitious goals or standards for what is expected of students, schools and the system overall, and collecting reliable data to measure the extent to which goals are being met. In addition, in strong evaluation and assessment systems, students, teachers, schools and policy makers receive the feedback they need to reflect critically on their own progress, and remain engaged and motivated to succeed.

In this chapter, four evaluation and assessment topics are covered (Figure V.8.1): student assessment, data-collection practices, school accountability and improvement actions at school.

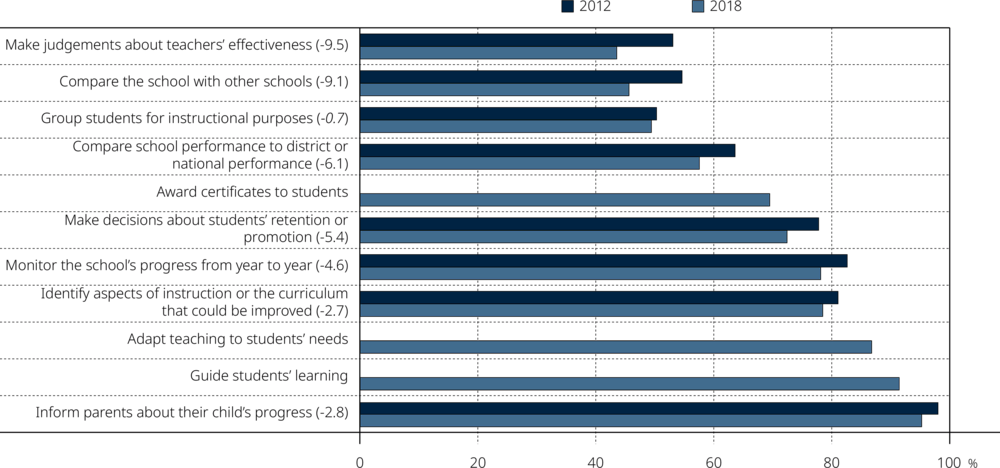

On average across OECD countries, the prevalence of using student assessments for various purposes declined between 2012 and 2018. For example, in 2012, 55% of students were enrolled in a school that compares its performance with that of other schools, while in 2018, 46% of students attended such a school. Similarly, in 2012, 53% of students attended a school that uses student assessments to make judgements about teachers’ effectiveness, while in 2018, 44% of students attended such a school.

Some 38% of students were enrolled in schools that post achievement data publicly, on average across OECD countries. These students scored five points higher in reading, on average across OECD countries, than students in schools that do not post data publicly, even after accounting for students’ and schools’ socio-economic profile. At the system level, however, the incidence of posting achievement data publicly was not correlated with mean performance in PISA, nor with equity in performance.

On average across OECD countries, students in schools whose principal reported that their school seeks written feedback from students scored better in reading than students in schools that do not seek written feedback, even after accounting for students’ and schools’ socio-economic profile. In addition, equity in student performance tended to be greater amongst countries and economies that have a higher percentage of students in schools that seek written feedback from students.

High-performing countries and economies tended to have more teacher mentoring on the school’s initiative. In those systems, more schools implemented a standardised policy for reading-related subjects taught at school (including a school curriculum with shared instructional materials, and staff development and training) based on district or national policies.

Countries and economies tended to have better equity in education when they: use student assessments to inform parents about their child’s progress; use student assessments to identify aspects of instruction or the curriculum that could be improved; use written specifications for student performance on the school’s initiative; seek feedback from students; and have regular consultations on school improvement at least every six months, based on district or national policies.

Student assessment refers to judgements on individual students’ progress and achievement. It covers school-based assessments designed by teachers or other staff at school, as well as large-scale external assessment and examinations (OECD, 2013[1]). Student assessments can have different purposes (Rosenkvist, 2010[2]; Lesaux, 2006[3]; Looney, 2011[4]). For example, formative assessments, or assessments for learning, do not have consequences for students, but instead aim to provide feedback to help students progress on their learning path (Shepard, 2000[5]; Hattie and Timperley, 2007[6]). Summative assessments, or assessments of learning, summarise and certify achieved learning and are sometimes used to make high-stakes decisions for students or teachers, such as promoting or retaining students, or grouping students by their achievement level.

PISA 2018 asked school principals about the purposes of the student assessments used in their school. On average across OECD countries, student assessments were most often used to inform parents about their child’s progress (95% of students were in schools whose principal reported that assessments are used for this purpose), to guide students’ learning (91%), and to adapt teaching to the students’ needs (87%) (Figure V.8.2). Student assessments were also commonly used to identify aspects of instruction or the curriculum that could be improved (78%), to monitor the school’s progress from year to year (78%) and to make decisions about students’ retention or promotion (72%). Less than half of students were in schools that use student assessments to group students by ability, to compare the school to other schools, or to make judgements about teachers’ effectiveness, on average across OECD countries.
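Throughout this chapter, prevalence is reported as the percentage of students enrolled in schools whose principal gave a given answer, not as the percentage of schools. The following minimal sketch shows why the two can differ. It assumes hypothetical school records in which each school carries a weight for the students it represents. The names `School`, `share_of_students` and `share_of_schools` are illustrative, not part of any PISA tool. PISA's own estimates also rely on its sampling weights and replicate-based standard errors, which this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass
class School:
    """Hypothetical school record: principal's answer plus a student weight."""
    name: str
    uses_for_purpose: bool  # principal reports assessments are used for the purpose
    student_weight: float   # number of students the school represents


def share_of_students(schools: list[School]) -> float:
    """Percentage of students enrolled in schools whose principal answered 'yes'."""
    total = sum(s.student_weight for s in schools)
    yes = sum(s.student_weight for s in schools if s.uses_for_purpose)
    return 100.0 * yes / total


def share_of_schools(schools: list[School]) -> float:
    """Percentage of schools whose principal answered 'yes' (unweighted)."""
    return 100.0 * sum(s.uses_for_purpose for s in schools) / len(schools)


schools = [
    School("A", True, 1200),
    School("B", True, 900),
    School("C", False, 300),
    School("D", False, 100),
]

print(share_of_students(schools))  # 84.0
print(share_of_schools(schools))   # 50.0
```

Large schools count for more students. In this invented example, half of the schools account for 84% of the students. For this reason, the student-based figures in this chapter should not be read as shares of schools.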

According to school principals, using student assessments for formative purposes was common in nearly all PISA-participating countries and economies. Three items included in Figure V.8.2 are measures of formative student assessment:

In 2018, at least 70% of students in every participating country and economy were enrolled in schools that use student assessments to guide student learning (Table V.B1.8.1).

Similarly, in 68 out of 79 countries and economies at least 70% of students were enrolled in schools that use student assessments to identify aspects of instruction or the curriculum that could be improved.

In addition, in 74 countries and economies, at least 70% of students attended a school that uses student assessments to adapt instruction to students’ needs.

By contrast, the incidence of using student assessments to make high-stakes decisions for either students or teachers was somewhat lower:

In only 22 countries and economies were at least 70% of students enrolled in schools that use student assessments to group students for instructional purposes.

In 36 countries and economies, at least 70% of students were enrolled in schools that use student assessments to make judgements about teachers’ effectiveness.

In 58 countries and economies, at least 70% of students were enrolled in schools that use student assessments to make decisions about retaining or promoting students.

On average across OECD countries, the practice of using student assessments to make judgements about teachers’ effectiveness declined by ten percentage points between 2012 and 2018, using student assessments to compare the school with other schools declined by nine percentage points, and using student assessments to make decisions about students’ retention or promotion declined by five percentage points (Table V.B1.8.1). Of all the purposes of student assessments included in Figure V.8.2, grouping students for instructional purposes was the only one that did not decline over the period. The share of students in schools that use student assessments for this purpose remained stable (50% of students attended such schools), on average across OECD countries.
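The changes above are expressed in percentage points. A percentage-point change is the difference between two shares, not a relative change. The following small sketch illustrates the distinction using the rounded OECD averages quoted earlier in this chapter for 2012 and 2018. These are 55% and 46% for comparing the school with other schools, and 53% and 44% for judging teachers' effectiveness. The function names are hypothetical.

```python
def change_in_points(share_before: float, share_after: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return share_after - share_before


def relative_change_pct(share_before: float, share_after: float) -> float:
    """Change expressed relative to the starting share, in percent."""
    return 100.0 * (share_after - share_before) / share_before


# Rounded OECD averages (% of students) quoted in this chapter, 2012 -> 2018.
purposes = {
    "compare the school with other schools": (55, 46),
    "judge teachers' effectiveness": (53, 44),
}

for purpose, (y2012, y2018) in purposes.items():
    points = change_in_points(y2012, y2018)
    relative = relative_change_pct(y2012, y2018)
    print(f"{purpose}: {points:+} percentage points ({relative:+.1f}% relative)")

# Differencing the rounded shares gives -9 points for judging teachers, while
# the text reports a ten-point decline. Published differences are typically
# computed from unrounded estimates, so one-point gaps like this can occur.
```

A nine-point fall from 55% thus corresponds to a relative decline of roughly 16%.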

The prevalence of using student assessments for specific purposes was similar between socio-economically advantaged and disadvantaged schools, with some exceptions. On average across OECD countries, using student assessments to group students for instructional purposes, to adapt teaching to students’ needs and to monitor the school’s progress from year to year was more common amongst disadvantaged than advantaged schools (Table V.B1.8.2).

PISA measures of the purposes of student assessments included in Figure V.8.2 were unrelated or only weakly related to reading performance (Table V.B1.8.4). On average across OECD countries, schools that showed higher performance tended to use student assessments to make decisions about their students’ retention or promotion, after accounting for students’ and schools’ socio-economic profile. In contrast, schools that showed lower performance tended to use student assessments to guide their students’ learning or adapt teaching to their students’ needs, on average across OECD countries. However, these results were not consistent across countries, showing both positive and negative associations.

PISA 2018 asked school principals whether their school collected data on student outcomes, specifically on students’ test results and graduation rates. On average across OECD countries, some 93% of students were in schools whose principals reported that they systematically record students’ test results and graduation rates (Figure V.8.3). Only in Austria, Brazil, Finland, Greece, Luxembourg and Switzerland was the share of students in schools that carried out systematic recording of student outcomes lower than 85%.

On average across OECD countries, about half of students were in schools whose principal reported that collecting data on student outcomes is mandatory (e.g. based on district or ministry policies), and 42% were in schools where data is collected on the school’s initiative (Figure V.8.3). In most countries and economies, the majority of students were in schools where the collection of data on student outcomes is mandatory. However, in 20 countries and economies, more than half of students were in schools where data on student outcomes is collected on the school’s initiative. In Beijing, Shanghai, Jiangsu and Zhejiang (China) (hereafter “B-S-J-Z [China]”), Hong Kong (China), Italy, Lithuania and Macao (China), more than 70% of students were in schools where data on student outcomes is collected on the school’s initiative rather than under mandatory government policies.

The systematic recording of student outcomes was more prevalent in 2018 than in 2015 in Italy, Kosovo, Lithuania, Montenegro, the Republic of North Macedonia (hereafter “North Macedonia”) and Switzerland, and was less prevalent in Brazil, Greece, Iceland, Luxembourg, Malta and Slovenia (Table V.B1.8.12).

On average across OECD countries, the prevalence of collecting data on student outcomes was similar, regardless of the socio-economic profile of the school, but differences were observed in some countries. In Austria, Belarus, Brunei Darussalam, Georgia, Iceland, Peru and Spain, collecting data on students’ results and graduation rates was more prevalent in advantaged schools, while in Denmark, France, Kazakhstan, Lithuania, Luxembourg and North Macedonia, this practice was more prevalent in disadvantaged schools (Table V.B1.8.13).

On average across OECD countries, students in schools that systematically record students’ test results and graduation rates scored better in reading than students in schools that do not collect this kind of data (a difference of six score points). After accounting for students’ and schools’ socio-economic profile, students in schools that collect data on student outcomes scored better in reading, on average across OECD countries (a difference of seven points) and in seven countries and economies (Figure V.8.4).

PISA 2018 asked school principals whether their school uses achievement data for accountability purposes, and if so, how. Three forms of school accountability were considered: providing achievement data to parents directly, posting achievement data publicly (for example, in the media), and tracking achievement data over time by an administrative authority. Achievement data, as defined here, refers to aggregated school or grade-level test scores or grades, or graduation rates.

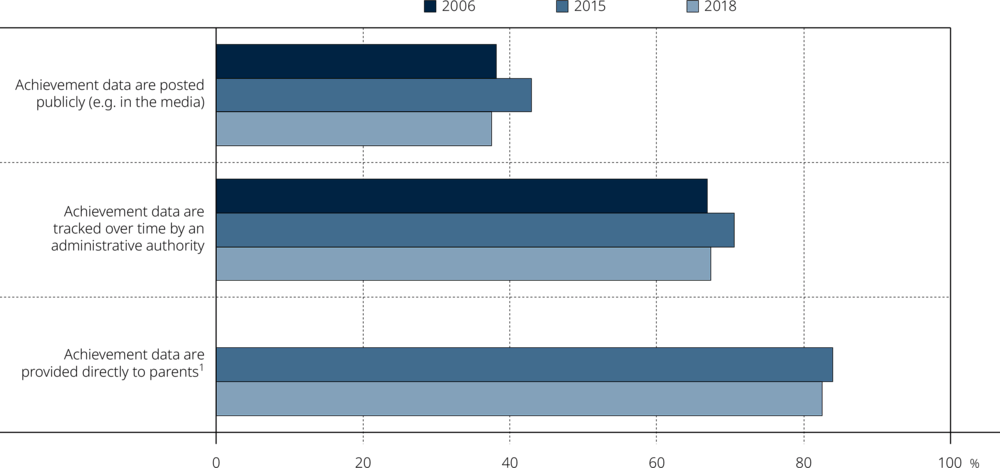

On average across OECD countries in 2018, about 83% of students attended schools that provide achievement data to parents directly (Figure V.8.5). This is the most common form of school accountability considered in PISA. In all countries and economies except Austria, at least half of students were enrolled in schools that provide achievement data to parents directly (Table V.B1.8.7).

Posting achievement data publicly (for example, in the media) is the least common form of school accountability, on average across OECD countries: 38% of students attended schools that do this (Figure V.8.6). In 58 out of 79 education systems, fewer than half of students were in schools that post achievement data publicly (Table V.B1.8.7). Posting data publicly was more common amongst advantaged schools (43% of students attended such schools) than disadvantaged schools (34%), and more common in urban (39%) than in rural (33%) schools, on average across OECD countries (Table V.B1.8.8).

The earliest cycle in which PISA collected information on school accountability was PISA 2006. On average across OECD countries, there was no change between 2006 and 2018 in the share of students in schools that post achievement data publicly (Figure V.8.6). In Brazil, Bulgaria, Indonesia, Ireland, Israel, Norway, Portugal, the Slovak Republic, Slovenia and Turkey, posting achievement data was more prevalent in 2018 than in 2006; but in Croatia, the Czech Republic, Estonia, Luxembourg, Montenegro, the Netherlands, the Russian Federation, Sweden, Chinese Taipei and the United Kingdom, it was less prevalent in 2018 than in 2006 (Table V.B1.8.7).

Similarly, the share of students in schools where achievement data are tracked over time by an administrative authority did not change between 2006 and 2018, on average across OECD countries (in both cycles it was 67%) (Figure V.8.5). In 19 countries and economies, the share of students in schools where achievement data are tracked over time by an administrative authority increased (Table V.B1.8.7). The largest increases were observed in Denmark, Indonesia, Norway and Chinese Taipei. In 10 countries and economies, the share of students in schools where achievement data are tracked over time by an administrative authority shrank over the period. The largest decreases were observed in Finland, Iceland and Luxembourg.

PISA 2006 did not collect information about schools providing data to parents.

PISA also collected data on school accountability in 2015. All three forms of school accountability were less prevalent in 2018 than in 2015, on average across OECD countries. The share of students in schools that post achievement data publicly was five percentage points smaller in 2018 than in 2015, on average across OECD countries. In Argentina, Bulgaria, Croatia, Greece, Hong Kong (China), Iceland and Latvia, the share of students enrolled in schools that post data publicly decreased by ten percentage points or more; in Korea and Luxembourg, the shares shrank by 20 and 21 percentage points, respectively (Table V.B1.8.7). By contrast, in Kosovo, Macao (China), Malta, Montenegro, Slovenia and Chinese Taipei, the share of students in schools that post achievement data publicly increased by five percentage points or more during this period.

The vast majority of students were in schools that use at least one of the three forms of school accountability: providing achievement data to parents directly, posting achievement data publicly, and having achievement data tracked over time by an administrative authority (Table V.B1.8.6). On average across OECD countries, only 5% of students were in schools whose principal reported that none of these three forms is used. In more than 60 countries and economies, fewer than 5% of students attended such schools, but in Austria, Finland and Germany, more than 20% did. At the other end of the spectrum, 26% of students were in schools whose principal reported that all three forms of school accountability are used. More than 50% of students in Kazakhstan, New Zealand, Saudi Arabia, Thailand, Turkey, the United Kingdom, the United States and Viet Nam attended such schools.

On average across OECD countries, students enrolled in schools that post achievement data publicly scored better in reading (by 13 points) than students in schools that do not post data publicly. Yet, socio-economically advantaged schools were more likely than disadvantaged schools to post achievement data publicly. After accounting for students’ and schools’ socio-economic profile, students in schools that post achievement data publicly still scored five points higher in reading, on average across OECD countries (Figure V.8.6). At the system level, however, the incidence of posting achievement data publicly was not correlated with mean performance in PISA, nor with equity in performance, as described in detail later in this chapter.
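The gap shrinks from 13 to five points because posting data publicly is more common in advantaged schools, and advantaged schools also tend to score higher. The following minimal sketch illustrates this kind of adjustment under several explicit assumptions:

* The six schools are invented so that the raw and adjusted gaps mirror the figures in the text.
* A single hypothetical school-level socio-economic index (`escs`) stands in for the student- and school-level measures of socio-economic profile used in the analysis.
* The adjusted gap is taken to be the coefficient on the accountability indicator in an ordinary least-squares regression. Here it is obtained via the Frisch-Waugh-Lovell theorem.

All function names are hypothetical.

```python
def mean(values):
    return sum(values) / len(values)


def residualise(y, x):
    """Residuals of y after an OLS regression of y on x (with intercept)."""
    mx, my = mean(x), mean(y)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum(
        (xi - mx) ** 2 for xi in x
    )
    return [yi - my - slope * (xi - mx) for xi, yi in zip(x, y)]


def raw_gap(score, flag):
    """Mean score of schools with flag=1 minus mean score of schools with flag=0."""
    with_flag = [s for s, f in zip(score, flag) if f]
    without_flag = [s for s, f in zip(score, flag) if not f]
    return mean(with_flag) - mean(without_flag)


def adjusted_gap(score, flag, escs):
    """Coefficient on flag in score ~ 1 + flag + escs, via Frisch-Waugh-Lovell:
    regress the escs-residualised score on the escs-residualised flag."""
    r_score = residualise(score, escs)
    r_flag = residualise(flag, escs)
    return sum(f * s for f, s in zip(r_flag, r_score)) / sum(f * f for f in r_flag)


# Hypothetical schools: 1 = posts achievement data publicly, 0 = does not.
posts = [0, 0, 0, 1, 1, 1]
escs = [-0.3, -0.1, 0.1, -0.1, 0.1, 0.3]  # advantaged schools post more often
score = [468, 476, 484, 481, 489, 497]     # built as 480 + 5*posts + 40*escs

print(round(raw_gap(score, posts), 1))             # 13.0
print(round(adjusted_gap(score, posts, escs), 1))  # 5.0
```

In this invented example, the five-point gap that remains is exactly the effect built into the data. With real data, the adjusted gap is only as good as the socio-economic measures included in the model.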

After accounting for students’ and schools’ socio-economic profile, the relationship between student achievement and the other two forms of school accountability was weak. In nine countries and economies, students in schools where achievement data is tracked over time by an administrative authority scored better in reading; in two countries, they scored worse (Table V.B1.8.9).

Similarly, after accounting for students’ and schools’ socio-economic profile, in six countries, students in schools that provide achievement data to parents directly scored better in reading, but in three countries they scored worse (Table V.B1.8.10).

Seeking written feedback from students

One way that schools evaluate themselves is by seeking written feedback from students. On average across OECD countries, 68% of students were in schools whose principal reported that the school seeks written feedback from students regarding their lessons, teachers or resources. This practice is typically based on the school’s own initiative (OECD average = 56%) rather than being mandatory (12%) (Table V.B1.8.11).

Students in schools that seek feedback from students performed better in reading than students in schools that do not, on average across OECD countries and in 16 countries. Yet, the association between student feedback and student performance is confounded by the fact that, in many education systems, socio-economically advantaged schools tend to seek feedback from their students more than disadvantaged schools do. After accounting for students’ and schools’ socio-economic profile, seeking feedback from students is still associated with better reading performance, on average across OECD countries and in nine countries and economies. The largest performance differences (at least 25 score points) were observed in B-S-J-Z (China), Macao (China), Qatar, Singapore and the United Arab Emirates.

Teacher mentoring

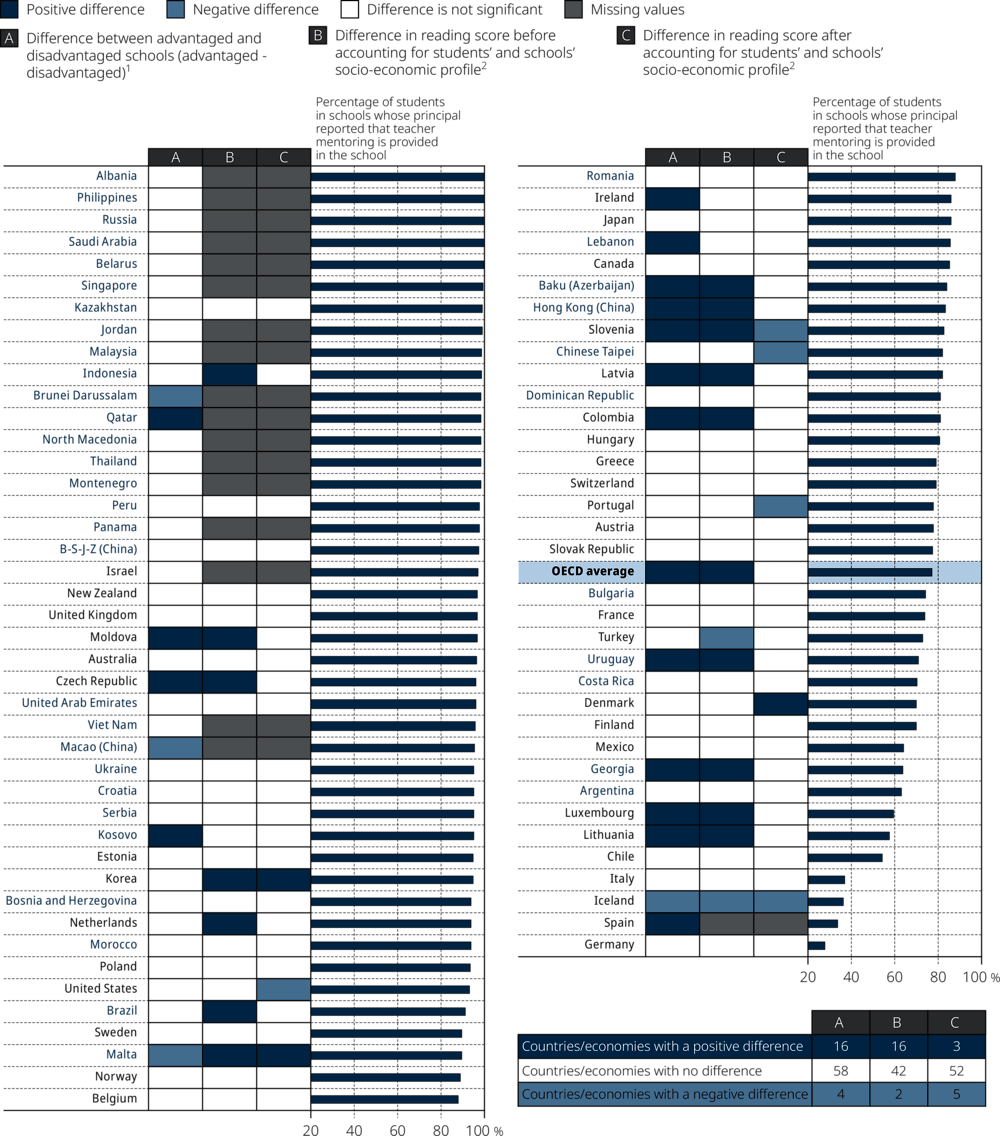

On average across OECD countries, 77% of students were enrolled in schools whose principal reported that teacher mentoring is provided in their school. In 62 countries and economies, more than three out of four students were enrolled in schools where teacher mentoring is provided. Teacher mentoring was the least prevalent in Germany, Iceland, Italy and Spain.

On average across OECD countries, teacher mentoring was typically based on the school’s own initiative (61% of students were enrolled in such schools), rather than being mandatory (17%). In B-S-J-Z (China), the Czech Republic, Estonia, the Netherlands, Poland and the United Kingdom, at least 85% of students were enrolled in schools whose principal reported that teacher mentoring in their school is provided on the school’s initiative. The countries where the availability of mandatory teacher mentoring was the greatest were North Macedonia (84% of students attended such a school), Serbia (70%), Saudi Arabia (69%) and Qatar (66%) (Table V.B1.8.11).

Students in schools where teacher mentoring is provided performed better in reading than students enrolled in schools where no mentoring is provided, on average across OECD countries (a 7 score-point difference), and in 16 countries and economies. Yet, the association between teacher mentoring and student performance is confounded by the fact that, in many education systems, the availability of teacher mentoring is greater in socio-economically advantaged than in disadvantaged schools.

This section examines how various policies and practices on evaluation and assessment are related to education outcomes at the system level. As shown in Figure V.8.9, two education outcomes are considered: mean performance in reading and equity in reading performance.

At the system level, countries and economies tended to show greater equity in education when they use student assessments to inform parents about their child’s progress (Figure V.8.10). Across OECD countries, after accounting for per capita GDP, the higher the percentage of students in schools that use student assessments to inform parents about their child’s progress, the weaker the relationship between students’ socio-economic status and their reading performance. After accounting for per capita GDP, these correlations are statistically significant in reading, mathematics and science, both across OECD countries and across all countries and economies (see Note 1).

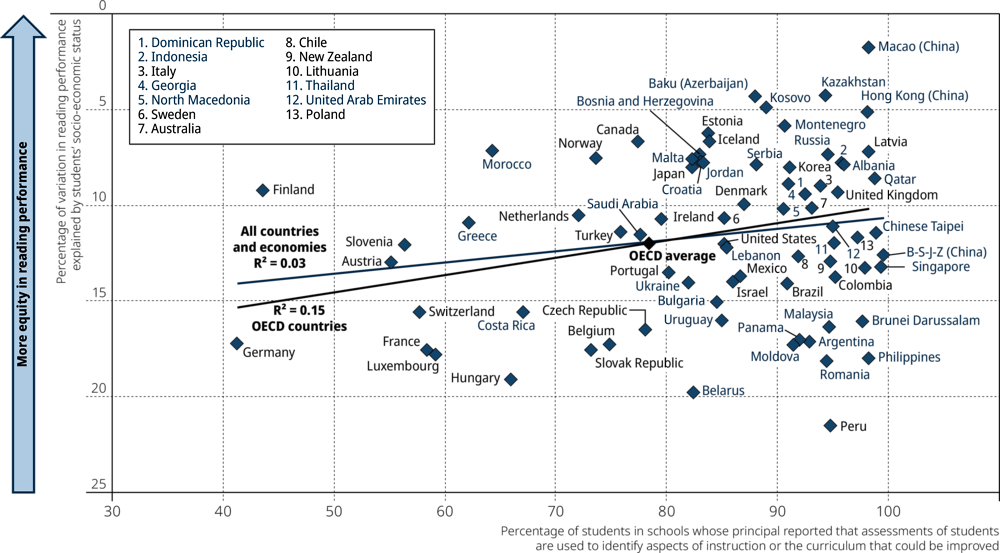

At the system level, countries and economies tended to have better equity in education when they use student assessments to identify aspects of instruction or the curriculum that could be improved (Figure V.8.11). Across OECD countries, after accounting for per capita GDP, the percentage of students in schools that use student assessments to identify aspects of instruction or the curriculum that could be improved was correlated with better equity in performance in reading (partial r = 0.41), mathematics (partial r = 0.40) and science (partial r = 0.45) (Table V.B1.8.16). Across all countries and economies, these correlations were statistically significant after accounting for per capita GDP (see Note 2).
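The partial r values above describe the correlation between two system-level variables after the linear influence of per capita GDP has been removed from both. The chapter does not spell out the computation. The following minimal sketch assumes the standard first-order partial correlation and applies it to hypothetical data for six systems. It computes partial r in two equivalent ways: first from the three pairwise correlations, and second as the correlation between the GDP-residualised variables. The variable names, values and equity scale are invented for illustration.

```python
import math


def mean(values):
    return sum(values) / len(values)


def pearson(x, y):
    """Pearson correlation coefficient."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_r(x, y, z):
    """First-order partial correlation of x and y controlling for z:
    (r_xy - r_xz * r_yz) / sqrt((1 - r_xz^2) * (1 - r_yz^2))."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def residualise(y, x):
    """Residuals of y after an OLS regression of y on x (with intercept)."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum(
        (a - mx) ** 2 for a in x
    )
    return [b - my - slope * (a - mx) for a, b in zip(x, y)]


# Hypothetical system-level data (one value per education system).
gdp_per_capita = [20, 25, 30, 35, 40, 45]  # thousand USD
share_using = [60, 72, 65, 80, 78, 90]     # % of students in schools using assessments this way
equity_index = [10, 14, 11, 15, 16, 18]    # higher = more equitable (invented scale)

direct = partial_r(share_using, equity_index, gdp_per_capita)
via_residuals = pearson(
    residualise(share_using, gdp_per_capita),
    residualise(equity_index, gdp_per_capita),
)
print(round(direct, 3), round(via_residuals, 3))  # the two routes agree
```

The residual-based route makes the meaning of "after accounting for per capita GDP" concrete. Both variables are stripped of the part that a straight line in GDP can predict, and only the remainders are correlated.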

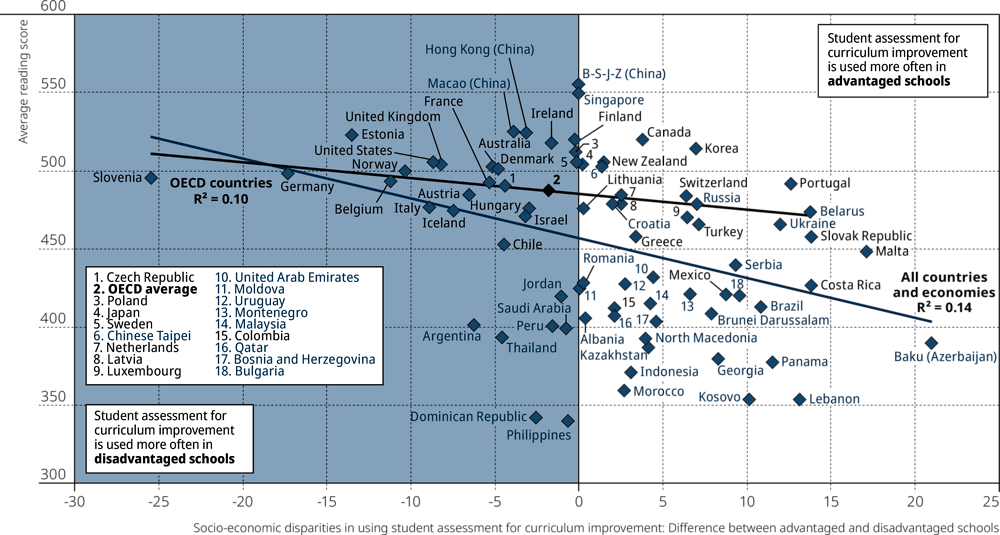

There was no clear pattern in the relationship between reading performance and the prevalence of using student assessments to identify aspects of instruction and the curriculum that could be improved. However, the socio-economic disparity in the use of student assessments for this purpose was associated with reading performance. In high-performing education systems, the prevalence of using student assessments for this purpose was similar in socio-economically advantaged and disadvantaged schools. In some cases, it was higher amongst disadvantaged schools than advantaged schools (Figure V.8.12). For example, in Slovenia, 73% of students in disadvantaged schools attended a school where student assessments are used to identify aspects of instruction and the curriculum that could be improved, compared with 48% of students in advantaged schools. In contrast, in Baku (Azerbaijan), 77% of students in disadvantaged schools attended such a school, compared with 98% of students in advantaged schools (Table V.B1.8.2).
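The socio-economic disparity discussed here can be summarised as a simple difference between the shares of students in disadvantaged and advantaged schools. The following minimal sketch uses the Slovenia and Baku (Azerbaijan) figures quoted above. The sign convention is an assumption for illustration, not the report's definition: disadvantaged minus advantaged, so a positive value means the practice is more common in disadvantaged schools. The function names are also hypothetical.

```python
from typing import NamedTuple


class SharesBySchoolType(NamedTuple):
    """% of students in schools using assessments to identify improvements."""
    disadvantaged: float
    advantaged: float


def disparity(shares: SharesBySchoolType) -> float:
    """Disadvantaged minus advantaged, in percentage points (assumed convention)."""
    return shares.disadvantaged - shares.advantaged


def describe(gap: float) -> str:
    if gap > 0:
        return "more common in disadvantaged schools"
    if gap < 0:
        return "more common in advantaged schools"
    return "no difference"


# Figures quoted in the text (Table V.B1.8.2).
systems = {
    "Slovenia": SharesBySchoolType(disadvantaged=73, advantaged=48),
    "Baku (Azerbaijan)": SharesBySchoolType(disadvantaged=77, advantaged=98),
}

for name, shares in systems.items():
    gap = disparity(shares)
    print(f"{name}: {gap:+} percentage points, {describe(gap)}")
# Slovenia: +25 percentage points, more common in disadvantaged schools
# Baku (Azerbaijan): -21 percentage points, more common in advantaged schools
```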

To consider the issue of quality assurance and improvement actions at school, school principals were asked to report whether there are written specifications for student performance at the school and, if so, whether they are mandatory (e.g. based on district or ministry policies) or on the school’s initiative. Figure V.8.13 shows that, at the system level, countries and economies tended to show greater equity in education when more students were in schools that have written specifications for student performance based on the school’s initiative (see Note 3).

The origin of written specifications for student performance, that is, whether they are mandatory or set on the school’s initiative, had a distinct relationship with education outcomes. When written specifications for student performance are mandatory, no clear relationship with equity was observed. However, across all countries and economies, mandatory written specifications were negatively related to performance in reading, mathematics and science. These relationships remained significantly negative even after accounting for per capita GDP (Table V.B1.8.16). In other words, lower-performing education systems tended to have more students in schools with mandatory written specifications for student performance. One possible interpretation is that district or ministry authorities impose mandatory specifications for student performance as a way to address low performance.

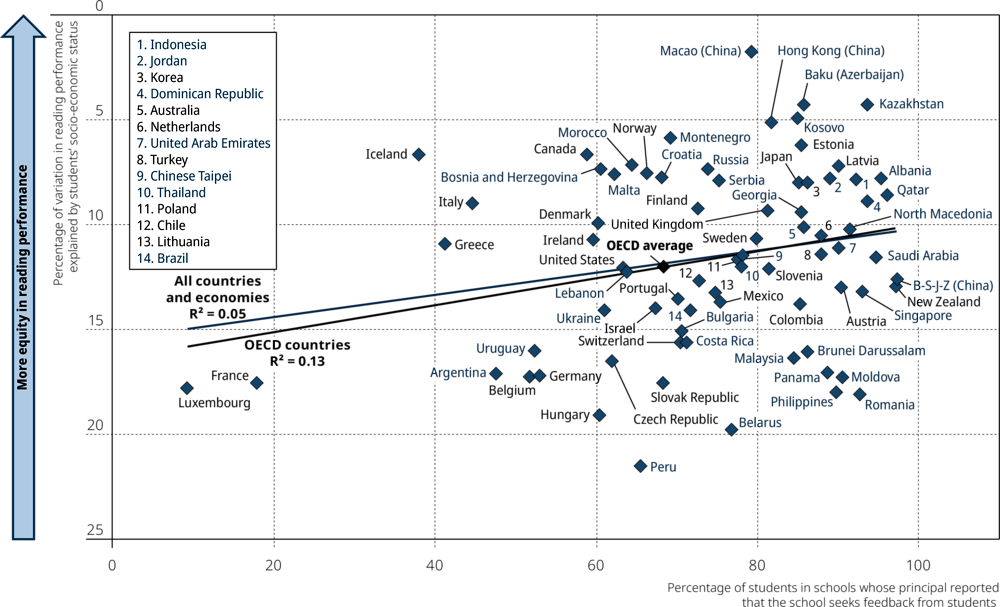

Another aspect of quality assurance and improvement actions at school that relates to equity in performance is whether schools seek written feedback from students (e.g. regarding lessons, teachers or resources). Figure V.8.14 shows that, at the system level, equity in student performance tended to be greater in countries and economies with a higher percentage of students in schools whose principal reported that their school seeks feedback from students. This holds regardless of whether such feedback is mandatory or sought on the school’s initiative (see Note 4). Over 12% of the variation in equity in reading performance across OECD countries could be accounted for by differences in the prevalence of schools seeking feedback from students. The correlation between the percentage of students in schools seeking feedback from students and equity in reading, mathematics and science performance was statistically significant, even after accounting for per capita GDP, across OECD countries and across all countries and economies (Table V.B1.8.16).

At the system level, mean student performance tended to be higher in countries and economies with a larger share of students in schools whose principal reported that teacher mentoring is provided on the school’s initiative. Some 17% of the variation in mean reading performance across all PISA-participating countries and economies could be accounted for by differences in the prevalence of teacher mentoring at the school’s initiative (Figure V.8.15). The correlation coefficients between the percentage of students in schools with teacher mentoring on the school’s initiative and mean performance in each of the three core PISA subjects – reading, mathematics and science – were positive and statistically significant, even after accounting for per capita GDP, across OECD countries, and across all countries and economies (partial r coefficients ranging between 0.37 and 0.45) (Table V.B1.8.16).
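Two statements in this section express the share of variation accounted for: "over 12% of the variation" and "some 17% of the variation". These correspond to the R² of a simple linear regression across systems, which equals the square of the Pearson correlation. The following minimal sketch assumes such a bivariate fit. It checks the identity on hypothetical data and converts the two quoted shares back into correlation magnitudes. These bivariate correlations are not the partial r coefficients reported after accounting for per capita GDP, which answer a different question. The function names and data are invented for illustration.

```python
import math


def mean(values):
    return sum(values) / len(values)


def pearson(x, y):
    """Pearson correlation coefficient."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


def r_squared(x, y):
    """Share of the variance in y accounted for by the OLS line y = a + b*x."""
    mx, my = mean(x), mean(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum(
        (xi - mx) ** 2 for xi in x
    )
    a = my - b * mx
    ss_res = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


def abs_r_from_r_squared(share: float) -> float:
    """Magnitude of the bivariate correlation implied by a given R^2."""
    return math.sqrt(share)


# Hypothetical data: % of students in schools with a practice vs. an outcome.
practice = [40, 55, 62, 70, 81, 90]
outcome = [455, 470, 462, 490, 485, 501]
assert math.isclose(r_squared(practice, outcome), pearson(practice, outcome) ** 2)

# Converting the shares quoted in the text into correlation magnitudes |r|.
for label, share in [("feedback vs equity, OECD", 0.12),
                     ("mentoring vs mean reading, all systems", 0.17)]:
    print(label, round(abs_r_from_r_squared(share), 2))
# feedback vs equity, OECD 0.35
# mentoring vs mean reading, all systems 0.41
```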

When examining all countries and economies, whether teacher mentoring is mandatory or provided on the school’s initiative made a difference. Mandatory teacher mentoring was negatively related to performance, while teacher mentoring on the school’s initiative was positively related to performance. This contrast was not observed across OECD countries.

References

[8] Förster, C. et al. (2017), El poder de la evaluación en el aula.

[6] Hattie, J. and H. Timperley (2007), “The Power of Feedback”, Review of Educational Research, Vol. 77/1, pp. 81-112, https://doi.org/10.3102/003465430298487.

[3] Lesaux, N. (2006), “Meeting Expectations? An Empirical Investigation of a Standards-Based Assessment of Reading Comprehension”, Educational Evaluation and Policy Analysis, Vol. 28, pp. 315-333, https://doi.org/10.3102/01623737028004315.

[4] Looney, J. (2011), “Alignment in Complex Education Systems: Achieving Balance and Coherence”, OECD Education Working Papers, No. 64, OECD Publishing, Paris, https://dx.doi.org/10.1787/5kg3vg5lx8r8-en.

[1] OECD (2013), Synergies for Better Learning: An International Perspective on Evaluation and Assessment, OECD Reviews of Evaluation and Assessment in Education, OECD Publishing, Paris, https://dx.doi.org/10.1787/9789264190658-en.

[7] OECD (2013), “The evaluation and assessment framework: Embracing a holistic approach”, in Synergies for Better Learning: An International Perspective on Evaluation and Assessment, OECD Publishing, Paris, https://dx.doi.org/10.1787/9789264190658-6-en.

[2] Rosenkvist, M. (2010), “Using Student Test Results for Accountability and Improvement: A Literature Review”, OECD Education Working Papers, No. 54, OECD Publishing, Paris, https://dx.doi.org/10.1787/5km4htwzbv30-en.

[5] Shepard, L. (2000), “The Role of Assessment in a Learning Culture”, Educational Researcher, Vol. 29/7, pp. 4-14, https://doi.org/10.3102/0013189X029007004.

Notes

1. After excluding low-performing countries/economies (i.e. mean performance in reading lower than 413 points), the strength of the association across OECD countries remained almost unaltered (after exclusion, R² = 0.29), whereas across all countries/economies, the association strengthened (after exclusion, R² = 0.37).

2. After excluding low-performing countries/economies (i.e. mean performance in reading lower than 413 points), the strength of the association increased both across OECD countries (after exclusion, R² = 0.42) and across all countries/economies (after exclusion, R² = 0.30).

3. After excluding low-performing countries/economies (i.e. mean performance in reading lower than 413 points), the strength of the association increased both across OECD countries (after exclusion, R² = 0.43) and across all countries/economies (after exclusion, R² = 0.40).

4. After excluding low-performing countries/economies (i.e. mean performance in reading lower than 413 points), the strength of the association across OECD countries remained almost unaltered (after exclusion, R² = 0.37), whereas across all countries/economies, the association was not significant.

![Figure V.8.9 [1/4]. Evaluation and assessment, and student performance and equity](images/eps/g08-09-01.png)

![Figure V.8.9 [2/4]. Evaluation and assessment, and student performance and equity](images/eps/g08-09-02.png)

![Figure V.8.9 [3/4]. Evaluation and assessment, and student performance and equity](images/eps/g08-09-03.png)

![Figure V.8.9 [4/4]. Evaluation and assessment, and student performance and equity](images/eps/g08-09-04.png)