9. Competence assessment in German vocational education and training

This chapter reviews the methods of competence assessment in German vocational education and training (VET) and discusses their suitability for assessing the capabilities of artificial intelligence (AI) and robotics. It describes how vocational competences are defined, the instruments used to assess them and how VET examinations are developed and administered. In addition, it discusses the validity and reliability of assessment instruments in VET, providing concrete examples of assessments. Finally, the chapter indicates the advantages of using VET tests for assessing AI capabilities and concludes with several considerations for applying VET examinations to machines.

Developments in artificial intelligence (AI) and robotics will have a substantial impact on future working environments. Technologies will partially or completely take over tasks previously performed by humans. Such developments will change the professional skills and competences required of future generations of workers.

Which professional tasks are likely to be partially or entirely performed by AI or robotics? How can AI and robotics be trained to perform these tasks? What methods and tools can be used to assess their performance, particularly in comparison with humans?

One approach to these questions is to review methods commonly used to train and assess competences in humans. These methods could then inform the training and assessment of AI and robotics. This chapter provides an overview of how competences are assessed in vocational education and training (VET) in Germany. It looks at how skills and competences are defined; methods and tools in use; and examples of tasks used in VET. Finally, it reflects on the suitability of these methods and tools to assess the capabilities of AI and robotics and offers recommendations.

Germany has 325 occupations that are state-recognised or deemed state-recognised under the Vocational Training Act (BBiG) or the Crafts Code (HwO) (BIBB, 2020[1]). Some occupations, such as those in the medical field, are regulated in special laws (e.g. Nursing Act, Geriatric Care Act). Most vocational training programmes completed in Germany are based on the dual model (Baumgarten, 2020[2]). In these cases, apprentices alternate between the vocational school and the training company with which they have concluded a training contract. In dual VET, framework curricula (Rahmenlehrpläne) regulate school-based vocational education. They provide a detailed overview of the learning fields and learning outcomes for each VET occupation. In-company training is regulated by the Vocational Training Act (BMBF, 2019[3]) and the respective training regulations (Ausbildungsordnungen) for an occupation. Both the framework curricula and the training regulations are available for public inspection through the Standing Conference of the Ministers of Education and Cultural Affairs (KMK).

This chapter refers primarily to dual VET since it is the most common training model in Germany.1

Empirical and normative definitions of competence

The objective of VET is the acquisition of vocational competences. However, these vary by area of application, and there is no uniform definition in VET (Koeppen et al., 2008[4]; Zlatkin-Troitschanskaia and Seidel, 2011[5]; Rüschoff, 2019[6]). This section briefly discusses definitions of vocational competences in different contexts to provide a framework for the illustrations of VET in Germany. A rough distinction can be made between empirical and normative definitions of competence.

Empirical definitions are applied particularly when competences are to be measured. In empirical contexts, vocational competences are frequently described as “context-specific cognitive performance dispositions, which in functional terms relate to situations and requirements in certain domains” (Klieme and Leutner, 2006[7]). Hence, they are context-specific dispositions for action (Weinert, 2001[8]; Klieme and Leutner, 2006[7]; Rüschoff, 2019[6]). Such approaches usually aim at developing standardised assessment instruments. These would capture individual facets or sub-dimensions of vocational competence. In so doing, they draw quantifying conclusions about the respective (sub-) competences of an individual, e.g. about specific knowledge or skills (Nickolaus, 2018[9]).

Normative definitions of competence are used mostly in political and legislative debates when it comes to general educational objectives. Normative definitions may go well beyond the immediate work context. Thus, they might also consider non-occupational (e.g. societal) contexts as possible areas to apply vocational competences (KMK, 1996, 2007, 2011, 2017[10]; Roth, 1971[11]). Such comprehensive definitions are almost impossible to capture empirically due to their extensive scope. However, they are not generally designed with the aim of empirical measurement. Rather, they serve as an orientation framework for policy making and the description of educational goals such as by the Standing Conference of the Ministers of Education and Cultural Affairs of the Länder in the Federal Republic of Germany (KMK, 1996, 2007, 2011, 2017[10]), the German Vocational Training Act (BMBF, 2019[3]), and the German Qualifications Framework for Lifelong Learning (DQR, 2011[12]; BMBF, 2013[13]).

This difference between normative and empirical definitions has an important implication. While normative approaches often describe rather broad competence dimensions, empirical approaches break these dimensions down into sub-dimensions to make them accessible for measurement. This distinction becomes relevant in the following sections, which present both the normative frameworks of vocational competence and examples of different instruments for assessing competence.

Dimensions of competence in vocational education and training

At the policy level, the dimensions and levels of competence in German VET are largely based on the German Qualifications Framework (DQR, 2011[12]; BMBF, 2013[13]). This, in turn, is based on the European Qualifications Framework (EQF) (Cedefop, 2020[14]).

The EQF offers a shared European reference framework to facilitate the recognition of qualifications across different countries and educational systems. It covers qualifications at all educational levels and in all education and training sub-systems. In so doing, it provides a comprehensive overview of the qualifications in the countries involved in its implementation.

When applied in individual countries, the EQF is implemented in National Qualifications Frameworks that consider country-specific contexts and characteristics (BMBF, 2013[13]). The German Qualifications Framework, for example, presents a general taxonomy of competence dimensions that applies at all educational levels. It distinguishes between Professional Competence (Knowledge and Skills) and Personal Competence (Social Competence and Autonomy). It further distinguishes between eight educational levels – from pre-vocational training at Level 1 to a doctoral degree at Level 8 (BMBF, 2013[13]; DQR, 2011[12]).

The general competence dimensions remain the same at all educational levels. However, the required learning outcomes increase. Thus, the complexity with which graduates at each level need to master the competences also increases. German VET is located either at Level 3 for occupations that require two years of training or at Level 4 for occupations that require 3-3.5 years of training (DQR, 2011[12]; BMBF, 2013[13]).

Table 9.1 depicts the overarching competence dimensions along with the required mastery of these competences at Levels 3 and 4. Table 9.2 depicts an example of how these competence dimensions are applied to Industrial Electricians at Level 3 of the German Qualifications Framework. It also describes Industrial Electricians’ learning outcomes for each competence dimension (BMBF, 2013[13]).

Applications of competence dimensions in practice

Comprehensive vocational competence dimensions, such as those in the German Qualifications Framework, must be broken into smaller sub-competences, both general and occupation-specific, to make them accessible for empirical assessment. General competences encompass a broad spectrum of basic skills related to mathematics, reading and writing or general problem solving, among others (Weinert, 2001[8]; Klotz and Winther, 2016[15]). Occupation-specific competences are pertinent to a specific occupation or occupational area, for example, specific rules, principles and action patterns (Klotz and Winther, 2016[15]).

Rüschoff (2019[6]) conducted a systematic review on the methods of competence assessment in German VET. This included both instruments used within VET examinations and those developed for research. The results show that most available methods and tools, roughly 60% of the instruments covered in the review, are focused on the assessment of occupation-specific professional competences. These include commercial knowledge and skills in industrial clerks (Klotz and Winther, 2015[16]) or problem-solving skills (e.g. error analysis/troubleshooting) in car mechatronics (Abele et al., 2016[17]; Gschwendtner, Abele and Nickolaus, 2009[18]). Significantly fewer instruments, about 24%, were focused on general competences. Only 9% measured personal competences such as social or communicative competences (Rüschoff, 2019[6]).

Even if professional competences and skills are clearly in the forefront, personal competences are equally relevant for most professions. The relative scarcity of instruments to assess social and communicative competences, then, does not indicate these competences are less important. Rather, since these instruments are usually aligned with the framework curricula and training regulations, they typically emphasise professional aspects more than social and communicative aspects.

Yet, social and communicative skills will likely become more important for most professions, as employees will focus on more complex activities that require interdisciplinary exchange. This is especially the case with greater automation for routine tasks. The ability to communicate in a goal-oriented manner with professionals from a variety of backgrounds will hence be an important skill in the future.

Structure and grading of examinations

Final examinations in (dual) VET are regulated by the Vocational Training Act or the Crafts Code (§37-§50 BBiG / §31-§40a HwO) and organised by the chambers for the respective occupations. The chambers appoint examination boards (Prüfungsausschüsse) and conduct the examinations. Examination boards consist of at least three people who are qualified experts in the profession. They must also include representatives of employers and employees (in equal numbers) and at least one vocational school teacher. The examination board may also obtain qualified expert opinions from third parties (e.g. vocational schools).

The learning fields covered in the examinations are aligned with the framework curricula and training regulations for an occupation. Such an alignment and the explicit inclusion of occupational experts ensure that assessed competences and the occupational scenarios in the examinations correspond to industry practices and reflect workplace conditions.

Examinations are structured in different examination areas. They must cover between three and five areas, with “economics and social studies” being obligatory. Each area defines competences that apprentices must demonstrate and specifies certain instruments to assess them. Examinations are commonly designed to yield a total of 100 points across all tasks and examination areas. A very good performance or grade 1.0 in the German system is awarded 100 points (BIBB, 2020[19]).

Aggregated results for all final examinations held by the Chamber of Industry and Commerce (roughly 300 000 annually) are available in their examination statistics. These statistics contain information on the examination areas of the individual occupations, the pass rate of the examinations and the distribution of grades and average scores for individual examination areas (IHK, 2021[20]).

Development of examination tasks

The examination tasks are developed by one of several entities:

a task development committee put in place for a certain profession by the responsible chambers

a committee appointed by a chamber for a specific occupation in a federal state or region to develop the examination tasks, often together with a (supra-regional) development office for examination tasks

development offices for examination questions, for example the PAL (www.stuttgart.ihk24.de/pal) for industrial and technical professions, the ZPA (www.ihk-zpa.de) or AkA (www.ihk-aka.de) for commercial professions and the ZFA (www.zfamedien.de) for professions in the printing and media industry.

Development offices develop the examination tasks and carry out the examinations for a variety of different occupations. For example, the PAL develops examination tasks for 133 of the currently 325 recognised occupations in German VET (PAL, 2020[21]).

The development offices usually hold the copyright and the exploitation rights to the examination tasks. For examination tasks still in use, secrecy regulations are strict. Examination tasks stemming from previous years can in some cases be acquired for inspection or exam preparation from the development offices.

Types of examination tasks

Table 9.3 provides an overview of the different types of tasks. Tasks can be written, oral or practical, and either closed (e.g. written multiple choice) or open (e.g. practical work assignments). The respective examination board decides which tasks are used; not all types of tasks are used in every examination. However, certain tasks are related in that the content of one requires the completion of a previous one. This is because many examinations are case-related. They have different tasks but refer to the same underlying work-related scenario and the results build on one another.

The case-related approach makes it possible to depict complete action sequences. This, in turn, permits a more representative picture of the apprentices’ competences and skills in authentic work settings. For instance, apprentices might first complete a practical work assignment and then present and discuss the results. Table 9.4 depicts common and obligatory combinations of tasks.

Psychometric properties of assessment instruments in vocational education and training

Regarding the psychometric quality of these examination tasks, one of the advantages lies in their validity. The validity of an assessment refers to the extent to which a method or instrument can depict the intended target construct (e.g. vocational competence). This can be established in a variety of ways. For example, content validity addresses how well the tasks of a test cover the range of all possible tasks. Hence, it addresses how well the test represents the underlying construct to be assessed. The examinations aim to ensure content validity in two ways. First, they align examinations to the framework curricula and training regulations. Second, they draw on experts in the field to develop and select tasks that reflect the behaviour and knowledge needed by apprentices in their occupation.

A recent systematic review provides a comprehensive overview of the psychometric properties of the methods and instruments developed or in use in German VET (Rüschoff, 2019[6]), both in the context of VET examinations and in research contexts. Most instruments included in the review ensured the validity of the instruments through establishment of their content validity. They did this, for instance, by aligning the instruments with curricula and training regulations and drawing on expert ratings from the industry.

Roughly 22% of the instruments were further subjected to a construct validation, primarily through one of two methods. On the one hand, their convergent validity was assessed (i.e. the relationship of the instrument to other instruments measuring the same construct). On the other, their discriminant validity was assessed (i.e. ensuring the developed instrument does not correlate [strongly] with validated instruments measuring different constructs). The review identified a scarcity of results on the predictive validity of the instruments. It is unclear whether findings regarding the predictive validity are entirely lacking or just not publicly available. Most instruments in the review showed good or acceptable reliability (Rüschoff, 2019[6]).

An analysis of the psychometric properties of the final examinations in commercial professions in German VET studied the final examinations of 1 768 apprentices (Klotz and Winther, 2012[23]; Winther and Klotz, 2013[24]). The results suggest the examinations are clearly aligned with the requirements of an occupation. They also align with the practical training apprentices received before their examination.

However, the relative weights of the examination areas sometimes deviate. For instance, a large part of the curriculum in commercial professions refers to the goods and services domain (roughly half of the curriculum and one-third of the practical training). Yet, only about 20% of the examination relates to this content (Winther, 2011[25]; Winther and Klotz, 2013[26]).

The analyses also indicated that reliabilities of the tasks used in commercial final examinations are sufficiently high. However, reliabilities differed by apprentices’ ability levels. The examinations were especially reliable for test takers with average ability levels (with scores centred around the mean). They were somewhat less reliable for apprentices with either very high or very low ability levels (Winther and Klotz, 2013[26]). More detailed information on the psychometric properties of the examinations for individual professions is available to the respective chambers and is considered when tasks are to be reused.

Overall structure of the examination

The following examples provide an overview of the tasks used in an examination of Advanced Manufacturing Technicians at Level 4 of the German Qualifications Framework. The examples show excerpts of an examination held in the summer of 2017. All displayed tasks stem from the same examination. The tasks displayed were developed in Germany by the PAL (PAL, 2020[27]) and translated by the German American Chamber of Commerce of the Midwest (GACCMidwest, 2020[28]). To facilitate communication with an international readership, only the English translation is shown here.

The examination has a practical part, an oral part and a written part. As is common, the examination is case-related, meaning the different parts revolve around a typical professional scenario. In this case, they involve the construction and use of a disk separator. When completing the tasks, apprentices may use a mechanical and metal trades handbook, a collection of formulas, drawing tools and a non-programmable calculator that does not allow communication with third parties. They are further provided with a set of technical drawings. Figure 9.1 depicts one of seven technical drawings that were part of the examination materials.

Practical tasks

In the practical component of the examination, apprentices demonstrate professional skills through the completion of a sequence of interrelated tasks (e.g. producing a functional module of a disk separator). Apprentices are provided with a functional description of the module to be produced, the setup requirements and the necessary drawings. They have 6.5 hours to complete the assignment. Their performance is evaluated according to pre-determined evaluation criteria on every sub-task. Figure 9.2 shows an excerpt of the assignment as presented to apprentices during the examination.

Oral tasks

The oral part of the examination consists of situational discussions. During the practical assignment (i.e. while producing a functional module of a disk separator), apprentices will be asked questions related to the completion of the assignment. They are expected to provide short answers that show they can explain professional issues and hold situational expert discussions.

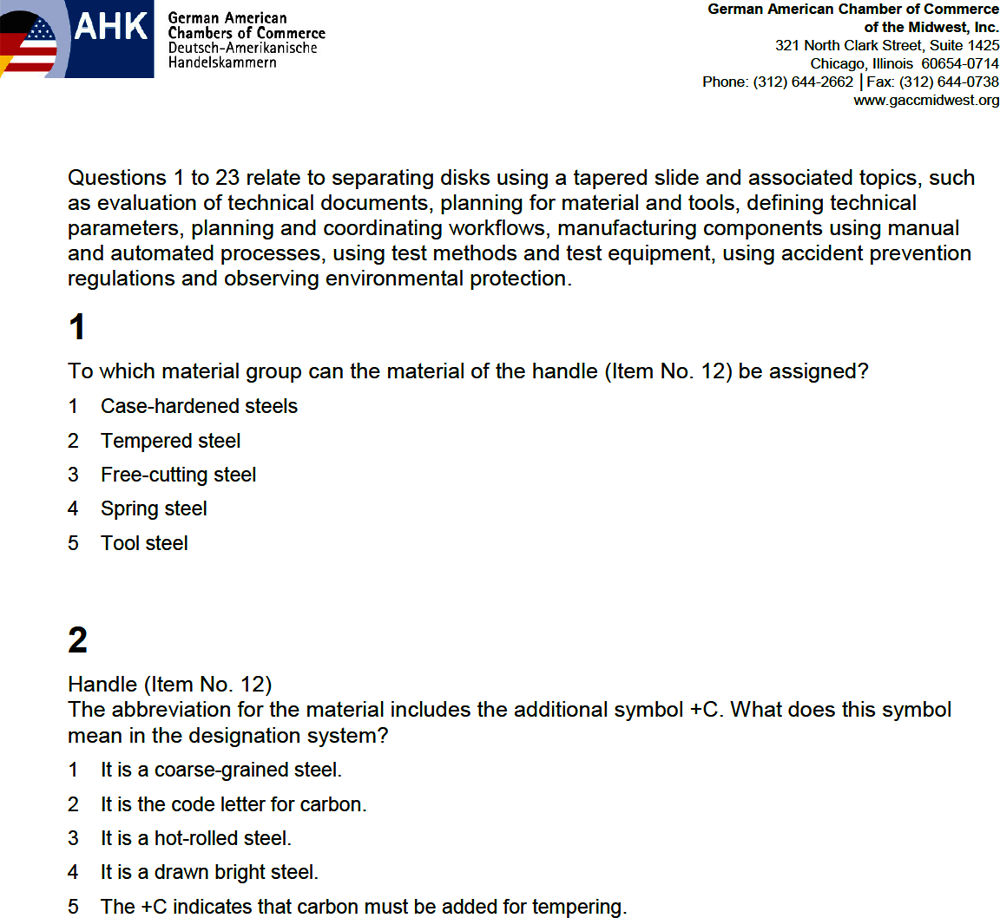

Written tasks

The written part of the examination has two parts. Part A consists of 23 multiple-choice questions, of which 20 must be answered. Six questions are mandatory; apprentices can decide which three of the remaining questions they wish to skip. Part B consists of eight short open questions that all need to be answered. Both parts A and B refer to the technical drawings provided beforehand (see Figure 9.1). Apprentices have 90 minutes to complete the two parts. Figure 9.3 provides examples of multiple-choice questions in part A. Figure 9.4 provides examples of short open questions in part B.

Evaluation and scoring of the results

The practical assignment is scored in three areas:

performance (testing the function of the model, control technology, visual inspection of the mechanical module and dimensional inspection)

the test protocol

the oral situational discussion (which is deemed part of the work assignment as it is held during the assignment).

Each of the three areas can yield a maximum of 100 points (with points already awarded and weighted in intermediate steps). In the final evaluation of the work assignment, a maximum of 100 points can be attained. The breakdown of points awarded is 85% for the performance phase; 10% for the test protocol; and 5% for the discussion phase. In the written part of the examination, each multiple-choice question yields 1 point, resulting in a maximum number of 20 points for part A (20 of 23 multiple-choice questions must be answered). Part B consists of eight short-answer questions that must all be answered. Each short-answer question yields 10 points, resulting in a maximum number of 80 points for part B. The divisors of parts A and B are 0.4 and 1.6, respectively (i.e. a maximum of 20/0.4 = 50 points for part A and a maximum of 80/1.6 = 50 points for part B). This gives parts A and B each 50 points or a weighting of 50% each. Together, apprentices can get 100 points for the written part of the examination. The practical and written parts of the examination each count for half of the final grade.
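The scoring arithmetic described above can be sketched in a few lines of Python. This is only an illustration of the weights and divisors stated in the text; the function names, the dictionary keys and the range check are assumptions introduced here and are not part of the examination regulations.

```python
# Illustrative sketch of the scoring arithmetic described in the text.
# Function names and dictionary keys are hypothetical, chosen for this example.

# Weights of the three areas of the practical work assignment (from the text).
PRACTICAL_WEIGHTS = {"performance": 0.85, "test_protocol": 0.10, "discussion": 0.05}

# Divisors that rescale the raw written scores to 50 points each (from the text).
DIVISOR_PART_A = 0.4  # 20 raw points / 0.4 = 50 points
DIVISOR_PART_B = 1.6  # 80 raw points / 1.6 = 50 points


def practical_score(area_points):
    """Weighted score (0-100) from three area scores, each on a 0-100 scale."""
    return sum(PRACTICAL_WEIGHTS[area] * area_points[area] for area in PRACTICAL_WEIGHTS)


def written_score(raw_a, raw_b):
    """Written score (0-100) from raw part A (0-20) and part B (0-80) points."""
    if not 0 <= raw_a <= 20 or not 0 <= raw_b <= 80:
        raise ValueError("raw points out of range")
    return raw_a / DIVISOR_PART_A + raw_b / DIVISOR_PART_B


def final_score(practical, written):
    """Practical and written parts each count for half of the final result."""
    return 0.5 * practical + 0.5 * written
```

For example, area scores of 80 (performance), 90 (test protocol) and 100 (discussion) give a practical score of 82 points, since 0.85 × 80 + 0.10 × 90 + 0.05 × 100 = 82. Raw written scores of 16 in part A and 60 in part B give 16/0.4 + 60/1.6 = 77.5 points.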

Advantages of performance-based assessments

The above review of the psychometric properties of the examination tasks in VET suggests these tasks are suitable for assessing vocational competences in humans. Are they equally suitable to assess vocational competences in machines? Addressing this question requires first understanding what the tasks reviewed above do and do not measure and how they differ from other assessment methods and instruments.

The tasks presented above are action-oriented and inherently practical, which are advantages. With work-related tasks, examiners can observe performance on the same behaviours (or very similar behaviours) that apprentices will need to demonstrate in their subsequent professions. Performance on these tasks is thus likely a direct predictor of later job performance.

Although these tasks draw on observable task-related behaviour (e.g. crafting a certain product), they indicate more than task performance. The examinations aim to use action-oriented tasks to draw inferences on an underlying characteristic of the apprentices such as the competences (e.g. knowledge or skills) that enable apprentices to exert this task-related behaviour. Yet, in action-oriented examinations, the tasks themselves already have considerable meaning. They are not only abstract indicators of the underlying construct but also demonstrate the target behaviour. This distinguishes the action-oriented tasks used in VET from other tests seeking to assess underlying characteristics or constructs such as tests of general mental ability. Tests of general mental ability are likewise used to predict job performance and occupational attainments in humans (Schmidt and Hunter, 2004[29]; Bertua, Anderson and Salgado, 2005[30]). However, they do not draw on work-related tasks to make inferences about future professional performance. Rather, they aim to assess general mental abilities that are expected to manifest in various domains, including future job performance. For example, individuals who score high on a general mental ability test have proven to be able to solve complex test items (observable behaviour). This observable behaviour is theoretically related to the concept of general mental ability. This, in turn, is assumed to relate to performance on other complex problem-solving tasks in real-life situations.

Advantages of performance-based assessments in artificial intelligence and robotics

The results that hold for humans may not apply in the assessment of the capabilities of AI and robotics. Questions in a general mental ability test are only indicators of general intelligence. The resulting score, then, is not an end in itself. For example, individuals will likely attain a high score on a general mental ability test if they memorise the answers first. However, this score will not measure underlying general mental ability. Rather, it will represent their ability to memorise answers to a test. As the result would no longer measure general intelligence, it would also not predict outcomes beyond the immediate test. Hence, it would be unrelated to outcomes usually associated with higher general mental ability.

In humans, the practice described above would be considered cheating on a test. By contrast, AI algorithms are typically trained with the answers known in advance, at least in specialised (weak) AI. Through repeated exposure to certain tasks or challenges, the AI is trained to solve tasks with increasing accuracy and efficiency. As a result, the AI’s performance will be a measure of its ability to learn the answers (or answering logics) to this type of test rather than of the presumed underlying characteristics (e.g. mental ability). Like an individual learning the answers to a test by heart, the resulting score will not be a measure of the intended underlying construct. Therefore, it will most likely no longer predict other outcomes typically associated with general mental ability because such measures gain their predictive value through links with other complex problem-solving skills.

In humans, research has consistently demonstrated these links. However, to successfully apply their general mental ability to other problem-solving tasks, humans likely also draw on other sources of information. These could be basic knowledge about material objects and their properties, events and their effects, or beliefs and desires – what computer science calls “common sense knowledge” (McCarthy, 1989[31]; Miller, 2019[32]). Whereas it can be assumed that humans possess this common sense knowledge, this is not (yet) the case in AI. Consequently, an AI trained on such tests might only be able to solve the questions on this type of test, and its test results would not allow for inferences about its (job) performance beyond the tests.

The tasks used in VET assess apprentices’ underlying competences through authentic professional tasks and requirements. In professional contexts, such work-related tasks are widely recognised as diagnostic instruments and are used successfully for assessment and selection (Hunter and Hunter, 1984[33]; Roth, Bobko and McFarland, 2005[34]; Lievens and Patterson, 2011[35]). Although practical work-related tasks also intend to infer underlying competences from task performance, the tasks themselves are directly related to vocation-specific requirements. In this way, they assess not only underlying competences but also vocation-specific task performance.

Thus, training an AI to learn and reproduce specific procedures to complete a professional task or to solve a work-related problem would train it to perform vocation-specific tasks efficiently. However, performance on one task can hardly, if at all, be generalised to performance on a different task or in an unknown context. The generalisability of measures of this type of task performance is thus narrow. Still, it would be certain that a person (or AI) can successfully complete this specific task.

Instruments used in German VET offer several advantages that could be useful for training and assessing the capabilities of AI and robotics:

Measurements used in VET are aligned with the framework curricula and training regulations, and fall under the responsibility of the respective chambers. They are thus developed together with industry and reflect authentic labour market requirements.

Measurements used in VET are performance-based. Performance-based measures capture competences through concrete professional behaviours. The assessed target behaviour has immediate relevance to exercise of the profession.

Competence assessments draw strongly on psychometric procedures and aim to capture underlying competences. However, assessing performance on concrete vocational tasks relies far less on theoretical assumptions regarding underlying psychological constructs than other assessment approaches. Thus, in performance-based assessments, a “successfully completed” task is meaningful in itself and there is no need to draw inferences about any underlying competences that enabled its completion.

The respective chambers constantly develop and evaluate examination tasks through ongoing examinations. Hence, they exist for a large number of different occupations.

In sum, training and assessing AI and robotics based on concrete work-related tasks might yield scientifically interesting results. Moreover, due to the strong practical orientation of the tasks, it can provide relevant insights for the industry when anticipating the capabilities of AI and robotics. Industry can then change the educational and skill requirements of their future workforce accordingly.

When selecting tasks to train and assess the capabilities of AI and robotics, the following points should be considered in this order:

In German VET, there are different responsible authorities and regulations for different types of occupations and training. It is advisable to start with occupations that fall under similar legislation, so that the requirements for the occupations and the examination structures used are largely comparable. The majority of occupations are regulated by the Vocational Training Act (BBiG) or the Crafts Code (HwO).

Choose both industrial-technical and commercial professions, as well as craft trades and possibly health professions to represent as broad a spectrum of the working world as possible. Within these areas, a variety of occupations should be chosen that require different competences.

The objective is to explore the future role of AI and robotics in the labour market. The most meaningful and relevant information will therefore be obtained by looking at occupations prevalent in today’s labour market. In addition, access to testing instruments will be easier for more common occupations than for less common ones. Statistics on the number of apprentices per occupation and year are publicly available in Germany (BIBB, 2019[36]).

Against the background of representing a broad spectrum of the working world, other occupations with more diverse competence requirements can be selected if there is too much overlap between the most common occupations.

Initially, one competence domain (e.g. skills or knowledge) can be chosen as a starting point. Ultimately, all relevant domains (i.e. skills, knowledge, social competences and autonomy) should be covered to give a comprehensive picture of AI and robotics capabilities.

Initially, only one type of task (i.e. practical, written or oral) could be chosen as a starting point. However, the different types of tasks are complementary in the assessment of relevant competences. Consequently, all types of tasks should ultimately be covered to give a comprehensive picture of the capabilities of AI and robotics.

As the last step, individual tasks to be used must be selected. The chambers, or the bodies commissioned by the chambers to develop the examination tasks, hold the rights to the examination materials. Therefore, materials should be obtained through the chambers.

Occupations from the same occupational area are often similar, so their test instruments may overlap (e.g. across different sales occupations or different types of mechanic occupations). In such cases, tasks used across occupations within a field should be prioritised.
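
The occupation-selection criteria above (comparable legislation, coverage of several occupational areas, prevalence and limited overlap) could be operationalised roughly as follows. This is only an illustrative sketch: the occupation names, apprentice numbers, competence labels, the `per_area` cap and the `max_overlap` threshold are all hypothetical placeholders, not BIBB data or a procedure prescribed by this chapter, and the Jaccard index is merely one possible way to quantify overlap in competence requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Occupation:
    name: str
    legislation: str        # e.g. "BBiG", "HwO" or a special law
    area: str               # e.g. "commercial", "industrial-technical", "craft"
    new_contracts: int      # placeholder for yearly apprentice numbers
    competences: frozenset  # hypothetical competence labels


def jaccard(a, b):
    """Share of competence labels two occupations have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def select_occupations(occupations, legislations, per_area=1, max_overlap=0.5):
    # Keep only occupations regulated under comparable legislation.
    candidates = [o for o in occupations if o.legislation in legislations]
    # Consider the most prevalent occupations first.
    candidates.sort(key=lambda o: o.new_contracts, reverse=True)

    selected = []
    for occ in candidates:
        # Cap the number per area so that several areas are represented.
        if sum(1 for s in selected if s.area == occ.area) >= per_area:
            continue
        # Skip occupations whose competences overlap too much with earlier picks.
        if any(jaccard(occ.competences, s.competences) > max_overlap for s in selected):
            continue
        selected.append(occ)
    return selected


# Made-up example records; none of these values come from real statistics.
OCCUPATIONS = [
    Occupation("Occupation A", "BBiG", "commercial", 300, frozenset({"sales", "accounting"})),
    Occupation("Occupation B", "BBiG", "commercial", 250, frozenset({"sales", "accounting", "logistics"})),
    Occupation("Occupation C", "BBiG", "industrial-technical", 200, frozenset({"diagnostics", "assembly"})),
    Occupation("Occupation D", "HwO", "craft", 150, frozenset({"assembly", "diagnostics", "customer advice"})),
    Occupation("Occupation E", "special law", "health", 400, frozenset({"care"})),
    Occupation("Occupation F", "HwO", "craft", 100, frozenset({"woodwork", "customer advice"})),
]

chosen = select_occupations(OCCUPATIONS, {"BBiG", "HwO"})
print([o.name for o in chosen])
```

In this toy data, the second commercial occupation is dropped by the per-area cap, and the more common craft occupation is passed over in favour of a less common one because its competences largely duplicate those of an occupation already selected. Relaxing `per_area` or `max_overlap` would trade breadth of coverage against prevalence.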

References

[17] Abele, S. et al. (2016), “Berufsfachliche Kompetenzen von Kfz-Mechatronikern – Messverfahren, Kompetenzdimensionen und erzielte Leistungen (KOKO Kfz) [“Occupational Competencies of Mechatronics Technicians – Measurement, Dimensions and Achievement Goals”]”, Technologiebasierte Kompetenzmessung in der beruflichen Bildung: Ergebnisse aus der BMBF-Förderinitiative ASCOT, Bertelsmann.

[2] Baumgarten, C. (2020), Vocational Education and Training: Overview of the Professional Qualification Opportunities in Germany, German Office for International Cooperation in Vocational Education and Training, Bonn, https://www.govet.international/dokumente/pdf/vocational_education_and_training_web.pdf.

[30] Bertua, C., N. Anderson and J. Salgado (2005), “The predictive validity of cognitive ability tests: A UK meta-analysis”, Journal of Occupational and Organizational Psychology, Vol. 3, pp. 387-409, https://doi.org/10.1348/096317905X26994.

[19] BIBB (2020), Richtlinie des Hauptausschusses des Bundesinstituts für Berufsbildung: Musterprüfungsordnung für die Durchführung von Abschluss- und Umschulungsprüfungen, ["Guidelines of the Committee of the Federal Institute for Vocational Education and Training: Recommended Regulations for Final Exams and Exams for Entering Retraining"], Bundesanzeiger Amtlicher Teil (BAnz AT 21.12.2020 S3), https://www.bibb.de/dokumente/pdf/HA120.pdf.

[1] BIBB (2020), Verzeichnis der anerkannten Ausbildungsberufe 2020, ["Directory of Recognised Occupations in Vocational Education and Training"], https://www.bibb.de/veroeffentlichungen/de/publication/show/16754.

[36] BIBB (2019), Rangliste 2019 der Ausbildungsberufe nach Anzahl der Neuabschlüsse, ["Ranking of Apprenticeships according to the Number of New Degrees 2019"], https://www.bibb.de/de/103962.php.

[22] BIBB (2013), Empfehlung des Hauptausschusses des Bundesinstituts für Berufsbildung (BIBB) zur Struktur und Gestaltung von Ausbildungsordnungen (HA 158), ["Recommendations of the Committee of the Federal Institute for Vocational Education and Training for the Structure and Design of Training Regulation Frameworks"], Bundesanzeiger Amtlicher Teil (BAnz AT 13.01.2014 S1), http://www.bibb.de/de/32327.htm.

[3] BMBF (2019), Berufsbildungsgesetz - BBiG (The new Vocational Training Act), Bundesministerium für Bildung und Forschung/Federal Ministry of Education and Research, Bonn, https://www.bmbf.de/upload_filestore/pub/The_new_Vocational_Training_Act.pdf.

[37] BMBF (2019), Report on Vocational Education and Training 2019, Bundesministerium für Bildung und Forschung/Federal Ministry of Education and Research, Bonn, https://www.bmbf.de/upload_filestore/pub/Berufsbildungsbericht_2019_englisch.pdf.

[13] BMBF (2013), Deutscher Qualifikationsrahmen für lebenslanges Lernen [German EQR Referencing Report], Bundesministerium für Bildung und Forschung/Federal Ministry of Education and Research, Bonn, https://www.dqr.de/media/content/German_EQF_Referencing_Report.pdf (accessed on 24 August 2020).

[14] Cedefop (2020), “European qualifications framework. Initial vocational education and training: Focus on qualifications at levels 3 and 4”, Research Paper, No. 77, Publications Office of the European Union, Luxembourg, http://data.europa.eu/doi/10.2801/114528.

[12] DQR (2011), Original Title in German [The German Qualifications Framework for Lifelong Learning adopted by the “German Qualifications Framework Working Group“], Willkommen auf der Internetseite zum Deutschen Qualifikationsrahmen, http://www.dqr.de/media/content/The_German_Qualifications_Framework_for_Lifelong_Learning.pdf (accessed on 24 August 2020).

[28] GACCMidwest (2020), “German American Chamber of Commerce in the Midwest”, webpage, https://www.gaccmidwest.org/ (accessed on 8 September 2020).

[18] Gschwendtner, T., S. Abele and R. Nickolaus (2009), “Computersimulierte Arbeitsproben: Eine Validierungsstudie am Beispiel der Fehlerdiagnoseleistungen von Kfz-Mechatronikern [Computer-simulated Work Samples: A Validation Study using the Example of Failure Detection by Mechatronics Technicians]”, Zeitschrift für Berufs- und Wirtschaftspädagogik, Vol. 4, pp. 557–578.

[33] Hunter, J. and R. Hunter (1984), “Validity and utility of alternative predictors of job performance”, Psychological Bulletin, Vol. 96/1, pp. 72–98, https://doi.org/10.1037/0033-2909.96.1.72.

[20] IHK (2021), Prüfungsstatistik der Industrie- und Handelskammer, http://pes.ihk.de/Berufsauswahl.cfm.

[7] Klieme, E. and D. Leutner (2006), “Kompetenzmodelle zur Erfassung individueller Lernergebnisse und zur Bilanzierung von Bildungsprozessen. Beschreibung eines neu eingerichteten Schwerpunktprogramms der DFG”, Zeitschrift für Pädagogik, ["Competence Models for Measuring Individual Learning Outcomes and Educational Processes. Description of a Programme of the German Research Foundation"], pp. 876–903.

[15] Klotz, V. and E. Winther (2016), “Zur Entwicklung domänenverbundener und domänenspezifischer Kompetenz im Ausbildungsverlauf: Eine Analyse für die kaufmännische Domäne”, Zeitschrift für Erziehungswissenschaft, ["On the Development of Domain-related and Domain-specific Competences in Vocational Education. Analysis in the Commercial Domain"], pp. 765-782, https://doi.org/10.1007/s11618-016-0687-1.

[16] Klotz, V. and E. Winther (2015), “Kaufmännische Kompetenz im Ausbildungsverlauf – Befunde einer pseudolängsschnittlichen Studie [“Commercial Competence in the Course of Vocational Training - Findings of a Pseudo-longitudinal Study”]”, Empirische Pädagogik, Vol. 29/1, pp. 61-83.

[23] Klotz, V. and E. Winther (2012), “Kompetenzmessung in der kaufmännischen Berufsausbildung: Zwischen Prozessorientierung und Fachbezug: Eine Analyse der aktuellen Prüfungspraxis”, Berufs- und Wirtschaftspädagogik, ["Competence Measurement in Vocational Training in Commerce. Between Process Orientation and Field Relevance: Analysis of Current Examination Procedures"], pp. 1-16, http://www.bwpat.de/ausgabe22/klotz_winther_bwpat22.pd.

[10] KMK (1996, 2007, 2011, 2017), “Handreichung für die Erarbeitung von Rahmenlehrplänen der KMK für den berufsbezogenen Unterricht in der Berufsschule und ihre Abstimmung mit Ausbildungsordnungen des Bundes für anerkannte Ausbildungsberufe”, Ständige Konferenz der Kultusminister der Länder in der Bundesrepublik Deutschland, ["Handout for the Development of Framework Curricula of the KMK for Job-related Teaching in Vocational Schools and their Coordination with the Federal Training Regulations for Recognised Training Occupations"].

[4] Koeppen, K. et al. (2008), “Current issues in competence modeling and assessment”, Zeitschrift Für Psychologie, Vol. 2, pp. 61-73, https://doi.org/10.1027/0044-3409.216.2.61.

[35] Lievens, F. and F. Patterson (2011), “The validity and incremental validity of knowledge tests, low-fidelity simulations, and high-fidelity simulations for predicting job performance in advanced-level high-stakes selection”, Journal of Applied Psychology, Vol. 96/5, pp. 927-940, https://doi.org/10.1037/a0023496.

[31] McCarthy, J. (1989), “Artificial intelligence, logic and formalizing common sense”, in Thomason, R. (ed.), Philosophical Logic and Artificial Intelligence, Springer, Dordrecht, https://doi.org/10.1007/978-94-009-2448-2_6.

[32] Miller, T. (2019), “Explanation in artificial intelligence: Insights from the social sciences”, Artificial Intelligence, Vol. 267, pp. 1-38, https://doi.org/10.1016/j.artint.2018.07.007.

[9] Nickolaus, R. (2018), “Kompetenzmodellierung in der beruflichen Bildung – eine Zwischenbilanz (Competency modeling in vocational training - an interim balance)”, in Schlicht, J. and U. Moschner (eds.), Berufliche Bildung an der Grenze zwischen Wirtschaft und Pädagogik, Springer VS, https://doi.org/10.1007/978-3-658-18548-0_14.

[21] PAL (2020), Jahresbericht 2019 [“Annual Report 2019”], Prüfungsaufgaben- und Lehrmittelentwicklungsstelle der IHK Region Stuttgart, https://www.stuttgart.ihk24.de/blueprint/servlet/resource/blob/4391022/cd49a986c985f428a9716ea48bb06c08/pal-2019-jahresbericht-data.pdf.

[27] PAL (2020), “PAL-Prüfungsbücher [“PAL-Examinations”]”, webpage, https://www.stuttgart.ihk24.de/pal/publikationen/buecher/pal-pruefungsbuecher-liste-3811836 (accessed on 8 September 2020).

[11] Roth, H. (1971), Pädagogische Anthropologie – Band 2. Entwicklung und Erziehung, ["Educational Anthropology - Volume 2. Development and Nurture"], Hermann Schroedel Verlag, Braunschweig.

[34] Roth, P., P. Bobko and L. McFarland (2005), “A meta-analytic analysis of work sample test validity: Updating and integrating some classic literature”, Personnel Psychology, Vol. 58/4, pp. 1009-1037, https://doi.org/10.1111/j.1744-6570.2005.00714.x.

[6] Rüschoff, B. (2019), Methoden der Kompetenzerfassung in der beruflichen Erstausbildung in Deutschland: Eine systematische Überblicksstudie, ["Competency Assessment Methods in Initial Vocational Training in Germany: A Systematic Overview Study"], Bundesinstitut für Berufsbildung, City.

[29] Schmidt, F. and J. Hunter (2004), “General mental ability in the world of work: Occupational attainment”, Journal of Personality and Social Psychology, Vol. 1, pp. 162–173, https://doi.org/10.1037/0022-3514.86.1.162.

[8] Weinert, F. (2001), Vergleichende Leistungsmessung in Schulen – eine umstrittene Selbstverständlichkeit [“Comparative Performance Measurement in Schools - a Controversial Matter of Course”], Weinert, F.E. (ed.), Publisher, City.

[25] Winther, E. (2011), “Kompetenzorientierte Assessments in der beruflichen Bildung – Am Beispiel der Ausbildung von Industriekaufleuten [“Competence-oriented Assessments in Vocational Training - Using the Example of the Training of Industrial Clerks”]”, Zeitschrift für Berufs- und Wirtschaftspädagogik, Vol. 107/1, pp. 33–54.

[24] Winther, E. and V. Klotz (2013), Measurement of vocational competences: An analysis of the structure and reliability of current assessment practices in economic domains, http://www.ervet-journal.com/content/5/1/2.

[26] Winther, E. and V. Klotz (2013), “Measurement of vocational competences: An analysis of the structure and reliability of current assessment practices in economic domains”, Empirical Research in Vocational Education & Training, Vol. 5/2, http://www.ervet-journal.com/content/5/1/2.

[5] Zlatkin-Troitschanskaia, O. and J. Seidel (2011), “Kompetenz und ihre Erfassung – das neue ’Theorie-Empirie Problem’ der empirischen Bildungsforschung? [“Competences and their Assessment – a New Problem between Theory and Empiricism in Empirical Education Research”]”, in Zlatkin-Troitschanskaia, O. and J. Seidel (eds.), Stationen empirischer Bildungsforschung: Traditionslinien und Perspektiven, Springer, VS Verlag für Sozialwissenschaften.

Note

1. Other forms of training include full-time school-based training. For a more detailed overview of the overall German VET system, see the German Federal Ministry of Education and Research (BMBF, 2019[37]) or the German Office for International Cooperation in Vocational Education and Training (GOVET; www.govet.international).